Agentic AI Workflow Recovery focuses on restoring the critical files, logs, datasets, and configurations that keep autonomous, agent-based AI systems running smoothly. When an agentic AI workflow breaks due to corrupted data, accidental deletion, or storage failure, you can lose not only outputs but also the context that agents need to make decisions. Understanding Agentic AI Workflow Recovery helps you trace what went wrong, bring back missing assets, and quickly return your AI agents to a stable, reliable state. With the right recovery strategy and tools like Recoverit, you can minimize downtime, protect valuable training data, and keep complex AI pipelines working as intended.

What Is Agentic AI Workflow Recovery

AI workflow recovery in an agentic context is the process of restoring the data, configuration, and orchestration logic that connect multiple autonomous agents into a functioning pipeline. Instead of focusing only on model checkpoints or final outputs, Agentic AI Workflow Recovery looks at the full lifecycle of tasks and the artifacts each step depends on.

In a typical agentic system, multiple agents collaborate to gather data, transform it, call external tools, write intermediate files, and then make decisions. If any of the underlying assets are lost or corrupted, the workflow can fail silently, produce low‑quality results, or become impossible to audit. Effective Agentic AI Workflow Recovery aims to:

- Identify which assets (datasets, logs, prompt templates, config files, scripts) went missing or became invalid.

- Restore those assets from backups, storage snapshots, or data recovery tools like Recoverit.

- Reconstruct enough context so agents can resume work with minimal reprocessing and without losing traceability.

Because agentic AI systems are highly iterative and stateful, protecting and recovering the state of the workflow is as important as protecting the models themselves. This is why a clear recovery strategy is essential for any serious AI pipeline in production.

How Does Agentic AI Workflow Recovery Work

Agentic AI Workflow Recovery usually follows a structured, repeatable process so teams can respond quickly when something breaks. While specific implementations vary, a common pattern includes these stages:

- Detect the failure and its impact scope. Monitoring, alerts, and anomaly detection highlight when an agent, job, or pipeline stops behaving as expected. Engineers determine which stages and assets are affected.

- Preserve the current state. Before making changes, teams snapshot the remaining data, logs, and configuration so they can analyze root causes later and avoid making the situation worse.

- Locate missing or corrupted assets. Using observability data, run histories, and file indices, teams identify which datasets, artifacts, or configuration files are damaged or have disappeared from storage or repositories.

- Recover underlying data. Tools such as Recoverit scan disks, servers, NAS, or external drives to restore deleted or corrupted items. Version control and object storage snapshots are also used at this stage.

- Validate integrity and consistency. Recovered files are checked for completeness, correct schema, hash or checksum matches, and compatibility with the current agent logic or orchestration code.

- Rebuild the workflow state. Pipelines are partially or fully re‑run from the last known good checkpoint. Agents may replay events from logs to reconstruct context or regenerate intermediate artifacts.

- Document and harden. Post‑incident reviews update runbooks, backup policies, and monitoring dashboards so similar failures are faster to detect and easier to fix.
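
The "Validate integrity and consistency" stage above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: it assumes you recorded a manifest mapping each relative file path to its SHA-256 digest before the incident. The function names `sha256_of` and `verify_recovered` and the manifest layout are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_recovered(root: Path, manifest: dict[str, str]) -> dict[str, list[str]]:
    """Compare files under `root` with a {relative_path: sha256} manifest.

    The manifest format is an assumption made for this sketch.
    """
    report = {"ok": [], "missing": [], "mismatch": []}
    for rel_path, expected in sorted(manifest.items()):
        candidate = root / rel_path
        if not candidate.is_file():
            report["missing"].append(rel_path)    # not recovered at all
        elif sha256_of(candidate) != expected:
            report["mismatch"].append(rel_path)   # recovered but altered or truncated
        else:
            report["ok"].append(rel_path)         # byte-for-byte identical
    return report
```

Files listed under `missing` or `mismatch` are candidates for another recovery pass or for regeneration by re-running the task that produced them.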

Central to this process is having reliable storage and a capable recovery solution. When deletion, file system errors, or hardware issues remove essential assets, a data recovery tool can be the difference between a quick rollback and a weeks‑long rebuild of your AI pipeline.

What Are the Types of Agentic AI Workflow Recovery

Agentic AI systems can break in many ways, from small configuration issues to large‑scale storage failures. Understanding the typical failure categories and common recovery strategies helps you design resilient workflows and choose the right response when issues occur.

Categories of Agentic AI Workflow Failures

Most agentic AI workflow incidents fall into a few high‑level categories, each requiring a different recovery focus.

| Failure Category | Impact on Agentic AI Workflow |
|---|---|
| Data loss or corruption | Missing datasets, broken parquet/CSV files, truncated logs, or corrupted vector stores cause agents to make poor decisions or fail at load time. |
| Configuration and orchestration errors | Changed environment variables, broken YAML/JSON configs, or damaged orchestration DAGs lead to skipped tasks, infinite loops, or mis‑ordered agent calls. |
| Code and dependency issues | Deleted scripts, incompatible library versions, or overwritten model artifacts prevent agents from executing tools or loading models correctly. |
| Infrastructure and storage failures | Disk crashes, NAS outages, or cloud storage misconfigurations remove access to shared directories, model registries, and artifact stores. |

Within these categories, you can also distinguish between:

- Soft failures where the workflow still runs but produces degraded or untrustworthy outputs.

- Hard failures where agents or orchestration platforms abort execution entirely.

The recovery path depends on whether you primarily need to repair trust in outputs, restore missing files, or rebuild infrastructure capacity.

Recovery Strategies for Agentic AI Workflows

Different failure categories call for different AI workflow recovery techniques. Common strategies include:

- File‑level recovery. When project folders, notebooks, or logs are accidentally deleted, tools like Recoverit can scan disks to restore those assets without impacting the rest of the stack.

- State and checkpoint restoration. For long‑running agentic processes, regular checkpoints of models, embeddings, and intermediate data allow partial replays from the last known good state.

- Environment reconstruction. Rebuilding containers, virtual environments, or infrastructure‑as‑code stacks ensures agents run on a consistent, clean base image after a failure.

- Schema and contract repair. When structure changes break downstream agents, rolling back schema versions or adding compatibility layers can restore flow without re‑ingesting all data.

- Hybrid restore plus re‑run. In complex pipelines, teams often restore critical assets from backup, then re‑run only a subset of tasks or agents to regenerate missing artifacts.

Because agentic AI systems are highly interconnected, it is common to combine these strategies: recovering lost data files, restoring orchestration DAGs, and re‑deploying updated containers at the same time to fully bring the workflow back.
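
For the hybrid restore plus re‑run strategy, one way to decide which tasks to re‑run is to walk the pipeline graph downstream from every task whose output could not be restored. The sketch below assumes the pipeline is an acyclic graph expressed as a plain dictionary mapping each task to the tasks that consume its output. The function name `tasks_to_rerun` and the task names in the usage note are hypothetical, and re‑running everything downstream is a deliberately conservative choice.

```python
from collections import deque


def tasks_to_rerun(dag: dict[str, list[str]], lost: set[str]) -> list[str]:
    """Return the lost tasks plus everything downstream, in a valid run order.

    `dag` maps each task to the tasks that consume its output.
    `lost` holds tasks whose outputs could not be restored from backup.
    """
    # Breadth-first walk: collect every task reachable from a lost one.
    affected: set[str] = set()
    queue = deque(lost)
    while queue:
        task = queue.popleft()
        if task in affected:
            continue
        affected.add(task)
        queue.extend(dag.get(task, []))

    # Kahn's algorithm, restricted to the affected subgraph.
    indegree = {task: 0 for task in affected}
    for task in affected:
        for child in dag.get(task, []):
            indegree[child] += 1  # children of affected tasks are also affected

    ready = deque(sorted(t for t, degree in indegree.items() if degree == 0))
    order: list[str] = []
    while ready:
        task = ready.popleft()
        order.append(task)
        for child in sorted(dag.get(task, [])):
            indegree[child] -= 1
            if indegree[child] == 0:
                ready.append(child)

    if len(order) != len(affected):
        raise ValueError("cycle detected; the graph is not a DAG")
    return order
```

With a graph such as `{"fetch": ["clean"], "clean": ["embed", "summarize"], "embed": ["report"], "summarize": ["report"], "report": []}` and `lost={"clean"}`, the function returns `["clean", "embed", "summarize", "report"]`, leaving `fetch` untouched because its output was restored.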

Practical Tips for Agentic AI Workflow Recovery

Good recovery outcomes start long before anything goes wrong. By preparing your workflows and infrastructure with recovery in mind, you make it far easier to respond quickly when you do experience agentic AI data loss or corruption.

- Map and document your workflows. Keep visual diagrams and text documentation that show which agents depend on which datasets, tools, configs, and external services.

- Separate critical and non‑critical assets. Identify the minimum set of files and services required to run your core pipeline so you can prioritize their protection and recovery.

- Use version control for everything text‑based. Store code, prompts, configs, and orchestration DAGs in Git (or similar) so you can roll back quickly after a bad change.

- Centralize and retain logs. Configure agents and tools to write detailed logs to durable storage. These logs are essential for root‑cause analysis and for replaying workflows.

- Implement layered backups. Combine local snapshots, off‑site or cloud backups, and object storage versioning for datasets and large artifacts.

- Test your recovery plan. Run fire‑drill exercises where you intentionally simulate data loss and practice using tools like Recoverit to restore critical assets.

- Harden shared storage. Use RAID, checksums, and health monitoring on disks and NAS systems that store AI datasets and model artifacts.

- Protect against accidental deletion. Apply access controls, soft‑delete policies, and recycle bins on shared project folders and storage buckets.

- Automate integrity checks. Schedule jobs that validate dataset schemas, run hash checks, or perform small sample inferences to detect silent corruption early.

- Keep clear runbooks. Maintain step‑by‑step guides that specify who does what when an incident occurs, including instructions for scanning and restoring files.
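
As an illustration of the "Automate integrity checks" tip, a scheduled job could run a lightweight schema check like the one below against each CSV dataset. This is a sketch under stated assumptions: the function name `check_csv_schema` and the idea of passing an expected column list are hypothetical, and the check only covers the header and per‑row field counts, not value types.

```python
import csv
from pathlib import Path


def check_csv_schema(path: Path, expected_columns: list[str]) -> list[str]:
    """Return a list of problems found; an empty list means the file passed."""
    problems: list[str] = []
    with path.open(newline="", encoding="utf-8") as handle:
        reader = csv.reader(handle)
        header = next(reader, None)
        if header is None:
            return ["file is empty"]
        if header != expected_columns:
            problems.append(f"unexpected header: {header}")
        for row in reader:
            # A truncated or corrupted file often shows up as a short row.
            if len(row) != len(header):
                problems.append(
                    f"line {reader.line_num}: expected {len(header)} fields, got {len(row)}"
                )
    return problems
```

A non‑empty result can be routed to the same alerting channel as other pipeline failures so that silent corruption is caught before agents consume the file.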

How to Use Recoverit to Recover Lost Data

Recoverit is a professional data recovery solution from Wondershare that helps you restore deleted, lost, or corrupted files from computers, external drives, servers, and more, making it ideal for rebuilding broken agentic AI workflows. By visiting the Recoverit official website you can download the tool and use its guided process to bring back datasets, configuration files, logs, and other assets that your AI agents rely on.

Key Features Offered by Recoverit

- Supports recovery from PCs, servers, external drives, NAS, and memory cards used to store agent code, datasets, and artifacts.

- Restores many file types used in AI projects, including documents, images, videos, archives, and serialized model files.

- Offers a preview feature so you can check scripts, notebooks, and dataset samples before final recovery to ensure integrity.

Step-by-Step Guide on How To Recover Lost Data

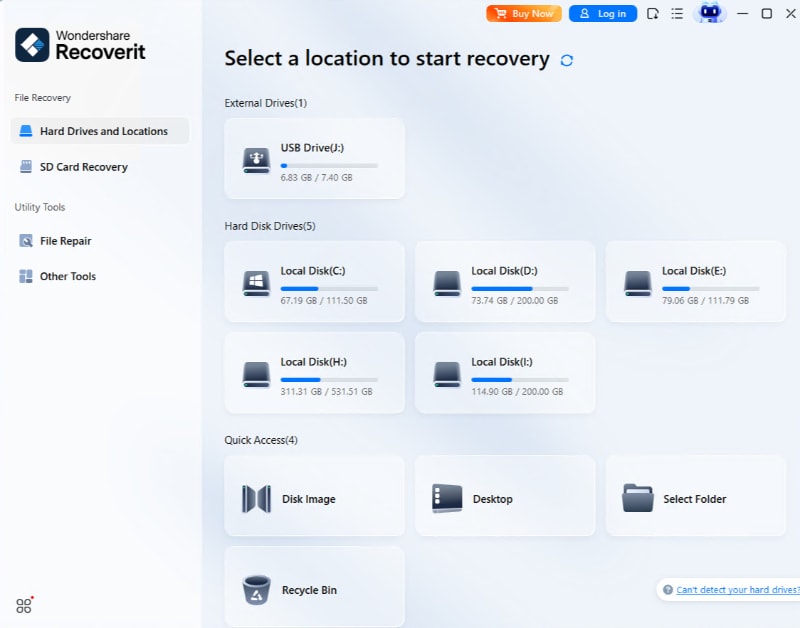

1. Choose a Location to Recover Data

Start Recoverit and look at the main screen to find where your AI workflow files were stored, such as a system drive, external disk, or specific folder. Highlight the exact location that contained your agents' code, datasets, or logs, then click the Scan button to begin searching for lost items in that area.

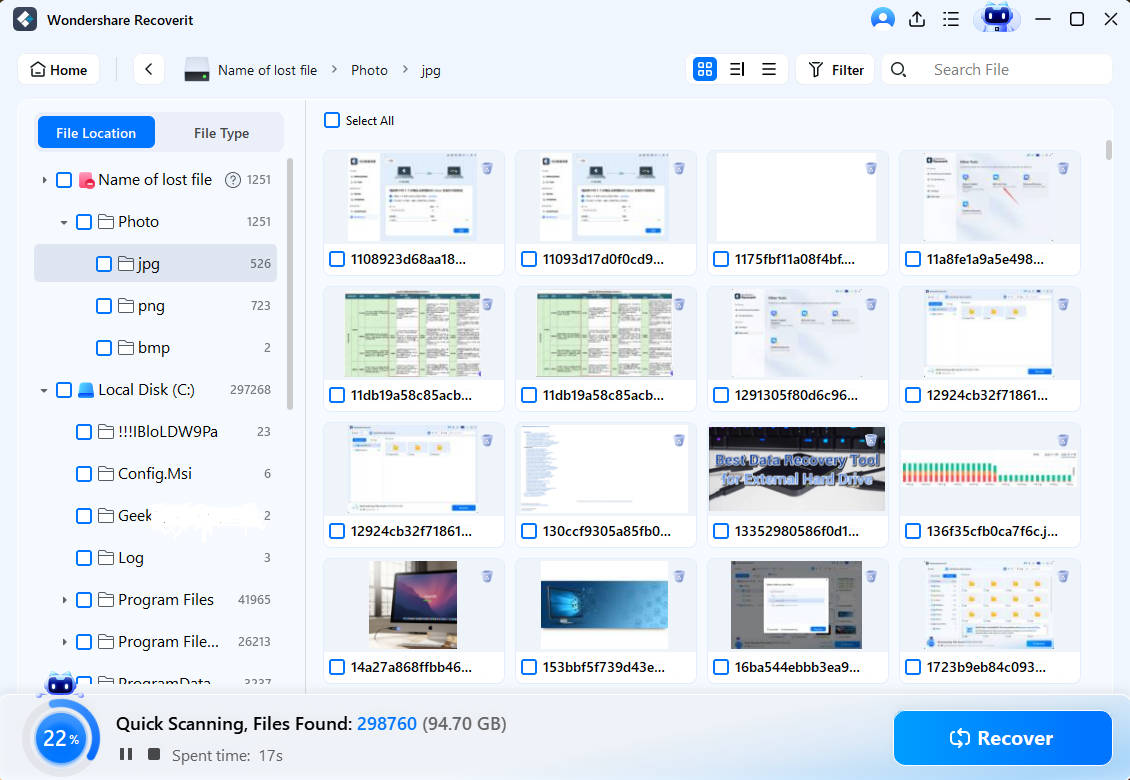

2. Deep Scan the Location

Recoverit now performs a thorough scan of the chosen location, automatically looking for deleted, lost, or damaged files linked to your agentic AI workflow. You can watch the scan progress in real time, pause if needed, or filter by file type to quickly narrow down to project folders, configuration files, and log outputs that your AI agents require.

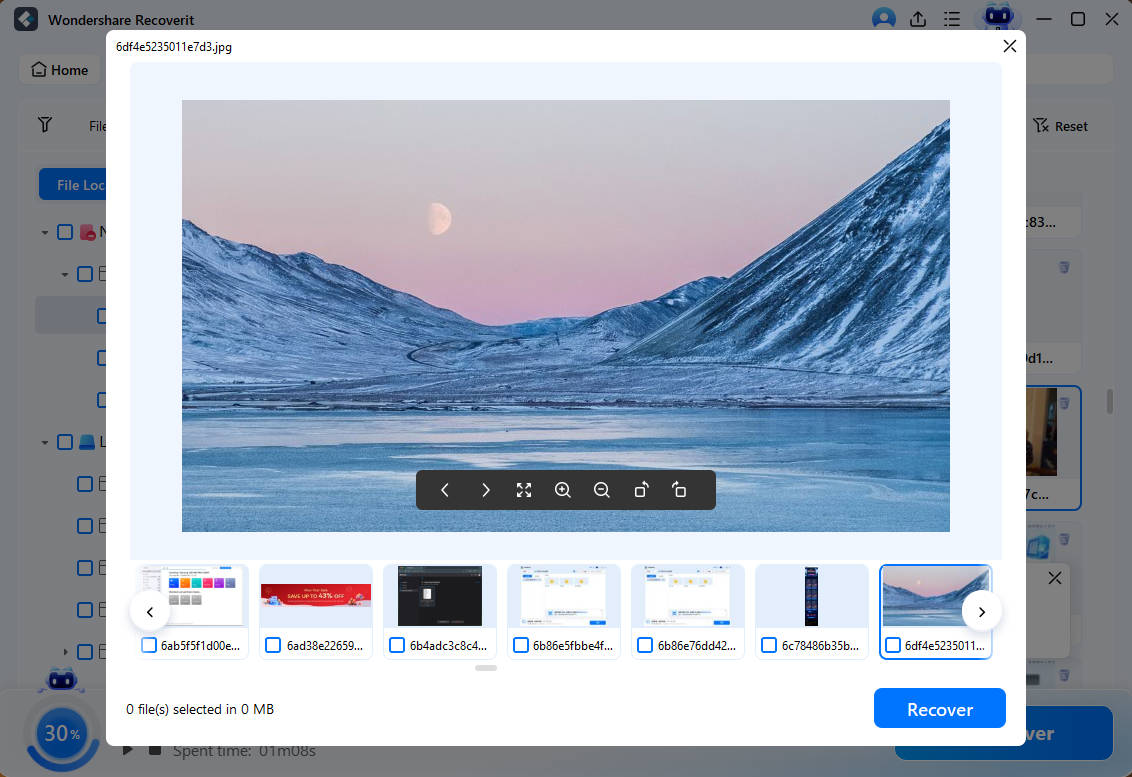

3. Preview and Recover Your Desired Data

When the scan finishes, browse through the results list and use the preview window to open important files, such as scripts, notebooks, configuration documents, and dataset snapshots, to confirm they are intact. Select the items you want to restore, click the Recover button, and save them to a safe storage location that is different from the original path to avoid overwriting remaining data.

Conclusion

Agentic AI Workflow Recovery is about more than fixing an error message. It is the process of restoring the data, context, and structure that your autonomous AI agents rely on to operate reliably. By mapping your workflows, protecting critical assets, and preparing for failure, you can cut downtime and avoid expensive rework when something goes wrong.

With a dedicated data recovery tool like Recoverit, you can quickly bring back lost datasets, configuration files, and logs that underpin your agentic AI pipelines. Combining technical safeguards with a clear recovery playbook ensures your AI projects remain resilient, traceable, and ready to scale.

FAQ

- What is Agentic AI Workflow Recovery in simple terms?

  Agentic AI Workflow Recovery is the process of restoring the files, settings, and data flows that allow autonomous AI agents to operate correctly after a failure, data loss event, or corruption issue.

- What causes data loss in agentic AI workflows?

  Common causes include accidental deletion of project folders, corrupted storage, version control mistakes, misconfigured automation, failed container or VM snapshots, and power or hardware failures affecting shared storage or log directories.

- Can I fully rebuild an agentic AI workflow after data loss?

  In many cases you can, especially if you have backups, version control, and detailed logs. A data recovery tool like Recoverit can help restore missing files so you can reconstruct pipelines and agent configurations more completely.
